Ugurcan Caykara

Hey everyone. The State of FinOps 2026 report has been released. 6th annual survey, 1,192 participants, over $83 billion in annual cloud spend represented. I want to walk through what the numbers are telling us and why they matter if you're working anywhere near Kubernetes infrastructure.

Whether you're on an infrastructure team, a software team, or leading one, it doesn't matter. At the end of the day, no matter how advanced the systems we build, there's one truth that overrides everything: none of it matters when the company is bleeding money. And the cost cuts that follow tend to hit in ways you might not expect.

Let's be real

When cloud costs go unchecked, the impact shows up from multiple directions:

- From the employer's side: budget problems. To keep margins healthy, cuts start showing up in other areas: perks, events, team activities, and sometimes even raises.

- From the employee's side: you won't see the cloud bill, but you'll feel what it causes. Budget constraints push good engineering practices to a lower priority. Tooling gets deprioritized, tech debt compounds, and the improvements you know are possible keep getting pushed to next quarter. The team never reaches the potential it could have.

- From the cloud itself: cloud is genuinely fantastic. The shared responsibility model takes a massive amount of operational overhead off your plate, and the pay-as-you-go model lets businesses move fast. But that same flexibility has a flip side. If you're not actively optimizing, if you're provisioning generously and nobody's watching the bill, it will catch up with you. Cloud doesn't punish you upfront. It lets you run, and then the invoice arrives.

Where cost optimization happens

So where does the waste actually come from? These are the usual areas to look at across your infrastructure (on-premises, cloud, or hybrid):

- Over-provisioned containers

- Abandoned workloads

- Orphaned resources

- Under-utilized node groups

- On-demand instance types when spot would work

- Data transfer costs (egress, cross-AZ)

- Idle GPU workloads

We cover these topics in depth across two dedicated series: the FinOps Framework series that walks through the framework conceptually, and the Kubernetes Cost Optimization series that goes deep into the technical practices, from workload rightsizing to node group optimization and beyond.
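To make the first item on that list concrete, here's a rough sketch of how you might flag over-provisioned containers by comparing CPU requests to observed usage. Everything in it is an assumption for illustration: the `ContainerUsage` shape, the 30% headroom over p95 usage, and the 0.25-core threshold are made-up defaults, not how any particular tool does it.

```python
from dataclasses import dataclass


@dataclass
class ContainerUsage:
    """Hypothetical record: one container's CPU request and observed p95 usage."""
    name: str
    cpu_request_cores: float
    cpu_p95_cores: float


def find_overprovisioned(containers, headroom=1.3, min_waste_cores=0.25):
    """Return (name, suggested_request, wasted_cores), largest waste first.

    A container is flagged when its request exceeds p95 usage times the
    headroom factor by at least min_waste_cores. Both knobs are assumptions.
    """
    findings = []
    for c in containers:
        suggested = round(c.cpu_p95_cores * headroom, 2)
        wasted = round(c.cpu_request_cores - suggested, 2)
        if wasted >= min_waste_cores:
            findings.append((c.name, suggested, wasted))
    return sorted(findings, key=lambda f: f[2], reverse=True)


if __name__ == "__main__":
    sample = [
        ContainerUsage("api", 4.0, 0.5),
        ContainerUsage("worker", 1.0, 0.7),
        ContainerUsage("batch", 2.0, 1.0),
    ]
    for name, suggested, wasted in find_overprovisioned(sample):
        print(f"{name}: request ~{suggested} cores, ~{wasted} cores idle")
```

In practice the usage numbers would come from your metrics pipeline over a long enough window to cover peak traffic. The point of the sketch is only the comparison itself.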

Why FinOps became this important

Your Kubernetes cluster scales beautifully. Your costs don't. That gap is exactly what the FinOps framework exists to close, and it's a framework I personally follow closely. The functions that drive Kubeadapt are built around it. The adoption curve is accelerating, and it's not hard to see why.

A cost optimization tool's core value isn't only in surfacing optimization opportunities. The reporting function alone, cost dashboarding, can be extremely valuable. Companies don't just want to see their IT infrastructure costs. They want to see developer license fees, the cost of internal tools such as the HR system, and a whole range of other external costs, all inside the tools that adopt the FinOps framework. Used as long-term reporting tools, these let them reshape their budgeting strategies: where to invest, how to invest, and whether they've managed to turn waste into a reusable resource.

Before FinOps, managing all this was painful because there was no shared framework. Each role had its own spreadsheets, its own dashboards, its own version of the truth. Now, with FinOps as the common language, CFOs, CTOs, and power users such as Platform or DevOps engineers can all get their different needs met from the same framework. Costs we used to be blind to are gradually becoming visible at one or more stages of the development lifecycle, alongside cost optimization products.

Key numbers from State of FinOps 2026

The biggest shift is AI. The percentage of companies actively managing AI spend jumped from 31% to 98% in just two years. That's not a trend, that's a landslide. And the pressure behind it is straightforward: optimize what you're already running so you can afford to invest in what's coming next. "Fund your AI investments by saving on existing cloud spend", that's the mandate a lot of teams are operating under right now.

FinOps isn't just about cloud anymore, either. The scope has expanded well beyond the cloud bill:

- 90% of FinOps teams now manage SaaS costs (think Slack, Jira, Datadog)

- 64% manage software licenses

- 57% private cloud, 48% data centers

- Even 28% are starting to cover workforce costs

It's becoming the entire technology spend.

The easy wins? Mostly gone. Teams already went through the obvious stuff: shutting down idle servers, buying reserved instances. What's left is smaller, harder, and way more labor-intensive. The conversation is shifting from "how do we cut costs" to "are we actually getting value from what we spend?" Unit economics, cost per customer, cost per transaction, these are the metrics moving to the foreground.
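Unit economics is just division, but it changes which question you ask. A tiny sketch, with made-up monthly figures (the dollar amounts and transaction counts below are illustrative assumptions, not numbers from the report):

```python
def unit_cost(total_cost_usd: float, units: int) -> float:
    """Cost per unit (customer, transaction, request) for one period."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost_usd / units


if __name__ == "__main__":
    # Hypothetical months: the bill grows, but each transaction gets cheaper.
    january = unit_cost(50_000, 10_000_000)
    february = unit_cost(60_000, 15_000_000)
    print(f"Jan: ${january:.4f} per transaction")
    print(f"Feb: ${february:.4f} per transaction")
```

In this made-up case the total bill went up by $10,000 while the cost per transaction went down. A dashboard that only shows the total would read that as a problem.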

78% of FinOps teams now report directly to the CTO or CIO, up 18% year over year. When you have VP-level engagement, these teams become 2-4x more influential in actual technology decisions: which cloud provider to use, where to run workloads, what services to adopt. A completely different level of impact compared to a few years ago.

Then there's shift-left. Teams want engineers to estimate costs before deploying. "If we build this architecture, how much will it cost per month?" Great idea, but there's a real measurement problem. Say a developer catches an over-provisioned request in a PR and drops CPU from 4 cores to 1. That saves $300/month. But the wasteful version never hit production, so the saving doesn't show up anywhere. No cost went down because the cost never went up. The evidence disappears the moment the fix happens. That's a gap most teams don't have an answer for yet.

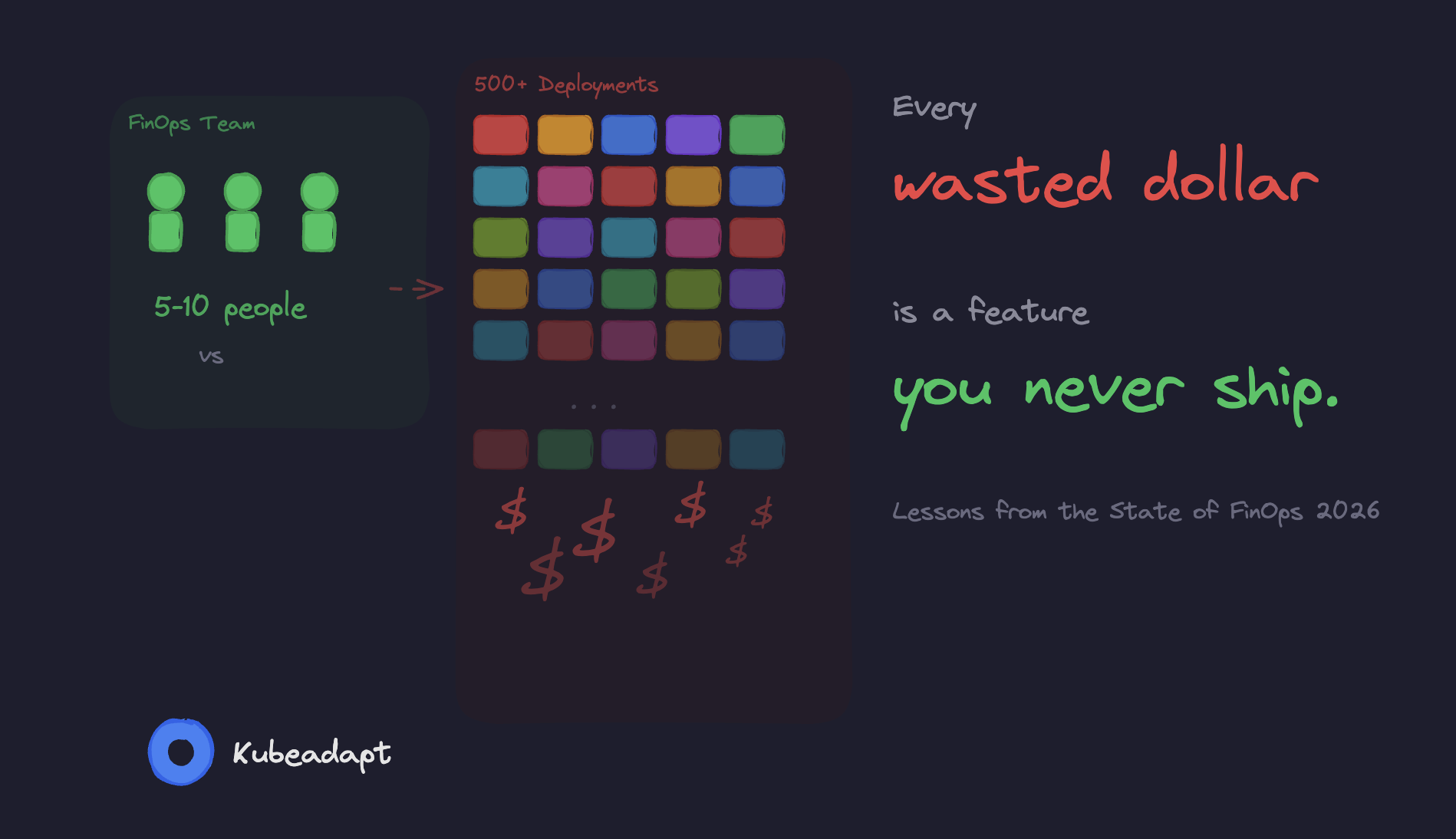
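One way to keep that shift-left evidence from disappearing is to write the avoided cost down at review time. Here's a minimal sketch, assuming a flat $100 per core-month (that's only what the 4-to-1 core, $300/month example implies, not a real price list). The `AvoidanceRecord` type, the ledger, and the PR identifier are hypothetical.

```python
from dataclasses import dataclass

# Assumption: implied by the example above (3 cores removed -> $300/month).
PRICE_PER_CORE_MONTH_USD = 100.0


@dataclass(frozen=True)
class AvoidanceRecord:
    """Hypothetical ledger entry created when a PR review trims a request."""
    pr_id: str
    workload: str
    cores_before: float
    cores_after: float
    replicas: int = 1

    @property
    def monthly_avoided_usd(self) -> float:
        # Cost that never reached production, so no bill will ever show it.
        cores_removed = self.cores_before - self.cores_after
        return cores_removed * self.replicas * PRICE_PER_CORE_MONTH_USD


def total_avoided(ledger) -> float:
    """Sum of monthly cost avoidance across all recorded reviews."""
    return sum(record.monthly_avoided_usd for record in ledger)


if __name__ == "__main__":
    ledger = [AvoidanceRecord("PR-EXAMPLE", "checkout-api", 4.0, 1.0)]
    print(f"Avoided: ${total_avoided(ledger):.2f}/month")
```

It's an estimate, not a measurement, and it should be reported separately from realized savings. But an estimate with a PR attached is still better than no record at all.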

And here's what ties it all together: even companies spending $100M+ on cloud have FinOps teams of only 8-10 people. They don't scale by hiring more. They scale by setting central standards and placing "FinOps champions" across the organization.

The FinOps Foundation actually changed its mission from "Advancing people who manage the value of cloud" to "Advancing people who manage the value of technology." That tells you everything about where this is heading.

The scaling problem

Here's where it gets real. FinOps teams at enterprises are 5-10 people. At some companies, they don't exist at all. But even when they do, scaling FinOps across IT is not something 5-10 people can pull off, because optimization, especially optimization that doesn't degrade performance, requires expertise across multiple layers, from workload optimization to node group optimization. Even if you manage to find people with the right skill sets, applying these improvements with 5-10 people across a company of 500, 1,000, or 2,000 is incredibly difficult.

Two approaches to managing this

1. Full automation

Automating the optimization processes end-to-end. There are vendors in the market doing this. You'll probably come across them if you look around a bit. But there's a problem: when a vendor is wired this deeply into your infrastructure, right down to the cluster's node autoscaler, that is not a vendor lock-in you can easily walk away from one day.

On top of that, you'll need to shift your focus to audit trail mechanisms that sit outside industry standards. What do I mean by that? For example, if you're applying GitOps patterns in Kubernetes and managing your cluster state in git repositories, it's completely natural to have reservations about integrating a tool that talks directly to the Kubernetes API and makes changes at that level. I won't go into the pros and cons here, but this was the first approach.

2. Bringing cost metrics down to the team level

Let's go back to our 5-10 person FinOps team. How long do you think it would take them to drive these optimizations across 500-1,000 deployments managed by 1,000 developers? While also carrying the coordination overhead, and dealing with a manager asking "why is this moving so slowly, why aren't costs dropping as expected?"

You could argue it's doable, but the obvious problems are already visible:

- You need to sit down with the developer team and decide whether their application is CPU-intensive or memory-bound. Oh wait, before that, you need to coordinate with the team and schedule the meeting at the right time. Just managing these back-and-forth logistics is extra work. And when you factor in sick leaves, vacations, and developers with completely different priorities, it becomes unmanageable.

- In a 1,000-person organization, hopefully there's an internal developer platform. Otherwise, without systematic and organized service ownership tracking, I don't even want to think about the delays that would happen while trying to figure out who owns which service and who's responsible for what.

- Remember the "FinOps champions" concept from the report? There's an underrated version of this: incentivize the people who actually drive savings. Say a team proves $100K in annual savings through rightsizing. If the company gives back 1-2% of that as a reward, a bonus, a gift, whatever fits the culture, you'd be surprised at the chain reaction it creates. People start paying attention. Other teams want in. The optimization work stops feeling like a chore imposed by the FinOps team and starts feeling like something worth doing. But here's the catch: none of this works unless you can actually track savings per team, per department, per service. You need that attribution layer in place first. That's one of the reasons we built Teams & Departments into Kubeadapt.
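The attribution layer behind that last point can be sketched in a few lines: roll proven savings up per team, then apply the 1-2% reward range. This is an illustration only. The record format and function names are hypothetical, and it is not a description of how Kubeadapt implements Teams & Departments.

```python
from collections import defaultdict


def savings_by_team(records):
    """Aggregate (team, annual_savings_usd) pairs into per-team totals."""
    totals = defaultdict(float)
    for team, annual_savings_usd in records:
        totals[team] += annual_savings_usd
    return dict(totals)


def reward_range(annual_savings_usd, low_rate=0.01, high_rate=0.02):
    """Reward band for the 1-2% give-back described above."""
    return (annual_savings_usd * low_rate, annual_savings_usd * high_rate)


if __name__ == "__main__":
    # Hypothetical rightsizing results, tagged with the owning team.
    records = [
        ("payments", 60_000),
        ("payments", 40_000),
        ("search", 25_000),
    ]
    for team, total in savings_by_team(records).items():
        low, high = reward_range(total)
        print(f"{team}: saved ${total:,.0f}/yr, reward ${low:,.0f}-${high:,.0f}")
```

For the $100K example, the band works out to $1,000 to $2,000. The hard part isn't the arithmetic. It's having every saving reliably tagged with a team in the first place.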

Sure, these situations can happen at any company. But the point isn't to fix organizational dysfunction. It's that when these kinds of processes are the norm, you need a tool that works within the reality of how your teams already operate, not one that demands a perfect organization before it can deliver value.

What's next

This post is the entry point for two dedicated series:

FinOps Framework Series

A walkthrough of the FinOps Framework itself: principles, scopes, personas, phases, maturity, domains, and capabilities. If you want to understand how to build and scale a FinOps practice in your organization, this is your path.

Kubernetes Cost Optimization Series

The technical deep-dive into Kubernetes cost optimization practices. The first topic is workload rightsizing: requests, limits, OOM kills, CPU throttling, autoscaling strategies. More topics like node group optimization and abandoned workloads will follow over time.

If you want to see more on these topics, follow along. I hope this was a useful read, and I'll see you in the next one.

If you want to see workload rightsizing in action, Kubeadapt installs in under a minute via Helm, reads directly from the Kubernetes metrics-server, and delivers per-workload rightsizing recommendations with exact dollar savings.

